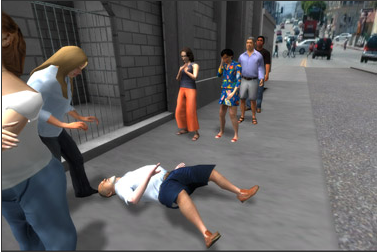

City noise fills the street as you wait in line for a small box of food. An argument breaks out over the last of the food distributions. A man collapses right in front of you, and you stand there suspended in time. You can’t do a thing to help.

Created by Nonny de la Peña, “Hunger in Los Angeles” is a 3D retelling of a scene outside a Los Angeles food bank housed in a church. It’s the kind of scene we read about every day in newspapers, but the virtual audio and visuals put you right into the world, seemingly while it is happening.

USC Annenberg describes the project:

De la Peña’s six-and-a-half minute interactive news piece allows one user at a time to enter a virtual reality, gaming-style environment set in the midst of a food bank distribution line located outside the First Unitarian Church, on 8th Street in Los Angeles.

During the eventful – and non-fiction – segment, chaos occurs when someone tries to steal food. A man in line falls to the ground in a diabetic coma. An ambulance and two paramedics arrive to assist.

The piece, like de la Peña’s other works and writing, explores the difference between the objective and the subjective, and re-imagines modes of creating and delivering contemporary journalism.

“Hunger” was crafted in part by using video game development and 3-D platform software, a head-mounted display and an audio recording made in 2009 by a student intern as part of the USC Annenberg journalism professor Sandy Tolan-led effort, “Hunger in the Golden State.”

Entering the experience is slightly disorienting: you slip a backpack full of wires over your shoulders, your ears are encased in headphones and your eyes are covered by a visor. Then the simulation starts, and you are standing in line with a group of digital people.

As a gamer, my first instinct in a virtual environment is to try to run, which was impossible given the constraints of the space. My second instinct was to look for some kind of objective, which is not the point of a simulation. The virtual reality part can be strange: there are in-world objects like curbs, cars and walls that do not provide the feeling one would expect. I also found myself trying to game the simulation, trying to separate my eyes from the visor, so I could have a better idea of where I was in the real world.

It’s clearly engaging. During the demonstration, someone nearly crashed into a wall. (I nearly did the same thing, despite the handler who follows you with the gear.) At points during the simulation, I crawled, put my hands through walls, knelt down to look at people on the ground and attempted to jump through walls. When the simulation ended, the action paused and an infographic lit up the screen.

After taking off the gear (and reorienting myself to reality, which took about two minutes), I interviewed de la Peña about the project, her team and what the future of journalism could feel like.

How did you come up with this project?

I started [my career in journalism] as correspondent for Newsweek, then I left that to do a documentary film. I always loved technology, but felt like I couldn’t do robust narrative issues with the platforms that existed. So I worked on a film called “Unconstitutional,” about post-9/11 civil rights abuses. Then I got a grant to create a virtual version of Guantanamo Bay prison. After we made that, I can remember the moment in my back yard where I thought “Holy shit, this is applicable to all journalism.” So, then I started thinking about spatial narratives, and what you call the “embodied edit” — there’s so much research about people and their connection to their virtual avatar. So how do you create a space where people have agency but are still acting within the space of the narrative?

[This is what we did in the Guantanamo project], you were placed in the body of the detainee. After planning out the project, we recorded audio and got actors to read the [Mohammad Al-] Qahtani [interrogation] transcripts, and in that project, you just were in it. [Participants] would hear [the interrogation] through the wall, and you could see yourself in a mirror and people reported feeling as if they were in that kneeling, tied position.

I was a research fellow at the University of Southern California J-School, so there was a class being run by Sandy Tolan, about Hunger in the Golden State, [and the question was] how do you put people in this [experience]? You can’t make people feel hungry, but you can put them in the scene, so what’s the moment when the food runs out at the food bank. That’s what I thought I would do, but people kind of went silent at that moment.

I knew that from previous

People have whipped out their cellphones while in the simulation, and have tried to comfort people. Kids are funny, as they will look at [the virtual] adults to figure out what to do.

Can you walk me through the technology?

We started with a Zoom microphone recording the scenes on the street. We didn’t do a lot of spatial sound redevelopment. [The visuals] were built in Unity 3D. I did the first version with some JavaScript; Bradley took over in C#. The cool thing about building in Unity is that you can spit it out on the Kinect camera; you don’t even need the Xbox, you can view it on your laptop. The Kinect experience [for the Hunger project] is a bit tough because we don’t have the gestures right yet.

What were the challenges with building this project?

We had to make some hard choices, editorially. That guy [who collapses] actually does get revived, and manages to leave. But we didn’t have the money and time to code all that, so it became an editing decision. I didn’t realize how much that would impact people, not knowing how the man’s story would resolve. A woman had a personal experience like that with someone with diabetes, and she came out of the experience crying.

Also, the usual problems with space, time and finding enough interns.

How much did all of this cost to put together?

I spent about $700 of my own money. It’s just the components, the motion sensor system. Unity 3D is open source. My guess is you could do this, depending on skill level and team, for about that.

Why do you think immersive experiences are important to the future of journalism?

I have no doubt that this is the future of journalism. We need to establish best practices. The power of this stuff is so huge, we need to be thinking about what our responsibility is as journalists, what decisions are we making. I certainly learned a huge lesson here.